Overview

I'm presenting here the technical aspects of setting up a small-scale testing lab in my basement, using as little hardware as possible and keeping costs to a minimum. The systems needed to be mobile where possible, easy to replace, and flexible enough to support various testing scenarios. For example, I may wish to bring part of this network with me on short trips to give a talk.

One of the core aspects of this lab is its network. I have prior experience with Cisco hardware, so I picked up some relatively cheap devices on eBay: a decent layer 3 switch (a Cisco C3750 with 24 ports and PoE support, in case I want to start using that) and a small Cisco ASA 5505 to act as a router. The router's configuration is basic: just enough to isolate the lab behind a firewall and give the router an IP address on every network. The switch's configuration is even simpler and consists of setting up a VLAN for each segment of the lab (different networks for different purposes). It connects infrastructure (the MAAS server and other systems that need to always be up) via 802.1q trunks, and the servers are configured with IP addresses on each appropriate VLAN:

- VLAN 1 is my "normal" home network, so that things keep working on devices that don't support VLANs (VLAN 1 is therefore the native VLAN, untagged wherever appropriate).
- VLAN 10 is "staging", for use with my own custom boot server.
- VLAN 15 is "sandbox", for use with MAAS.

The switch is only powered on when necessary, to save on electricity and to avoid hearing its whine (I work in the same room). In practice it is usually off, since the ASA already provides several Ethernet ports. The telco rack was salvaged, as were most brackets, except for the specialized ASA bracket, which was bought separately. The total cost of this setup is estimated at about $500, since everything came from cheap eBay listings or salvaged, reused equipment.

The Cisco hardware was chosen specifically because I had prior experience with it, so I could be sure the features I wanted were supported: VLANs, basic routing, and logs I can make sense of. Any hardware would do, though. VLANs aren't strictly required, but on a switch with many ports they avoid the need for multiple switches.

My main DNS, DHCP, and boot server is a Raspberry Pi 2. It serves both the home network and the staging network. DNS is set up so that the home network can resolve names on any of the networks, by appending home.example.com, staging.example.com, or maas.example.com to the system's name. Name resolution for the maas.example.com domain is forwarded to the MAAS server. More on all of this later.

The MAAS server runs on an old ThinkPad X230 (my former work laptop). I had been routinely using (and reinstalling) it for various tests, but that meant frequent reinstalls and possible conflicts whenever I tried to test more than one thing at a time. It was repurposed to run only Ubuntu 18.04, with a MAAS region and rack controller installed, along with libvirt (QEMU) available over the network so that virtual machines can be started remotely. It is connected to both VLAN 10 and VLAN 15.

Additional testing hardware can be attached to either VLAN 10 or VLAN 15 as appropriate: for convenience, the C3750 is configured so that the "top" ports are in VLAN 10 and the "bottom" ports are in VLAN 15. The first four ports are set up as trunk ports, for when a system needs access to several VLANs. I use a Dell Vostro V130 and a generic Acer Aspire laptop for testing "on hardware"; they are connected to the switch only when needed.
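The port layout above can be sketched as a small Python generator for the switch's interface configuration. This is a hypothetical helper, not the configuration actually used here. It assumes the C3750's ports are named FastEthernet1/0/1 to FastEthernet1/0/24 (a GigabitEthernet model would need a different prefix) and that odd-numbered ports form the top row and even-numbered ports the bottom row, as is common on Catalyst front panels.

```python
# Hypothetical generator for the port layout described above.
# Assumptions: ports are named FastEthernet1/0/N, odd ports are the "top"
# row and even ports the "bottom" row, and ports 1-4 are 802.1q trunks.
TOP_VLAN = 10      # staging
BOTTOM_VLAN = 15   # sandbox (MAAS)
NATIVE_VLAN = 1    # home network, left untagged on trunks


def port_config(port, trunk_ports=4, prefix="FastEthernet1/0/"):
    """Return the IOS interface stanza for one switch port, as a list of lines."""
    lines = [f"interface {prefix}{port}"]
    if port <= trunk_ports:
        # The C3750 supports ISL too, so the encapsulation must be set first.
        lines += [
            " switchport trunk encapsulation dot1q",
            f" switchport trunk native vlan {NATIVE_VLAN}",
            " switchport mode trunk",
        ]
    else:
        vlan = TOP_VLAN if port % 2 == 1 else BOTTOM_VLAN
        lines += [" switchport mode access", f" switchport access vlan {vlan}"]
    lines.append("!")
    return lines


def switch_config(ports=24, **kwargs):
    """Concatenate the stanzas for ports 1..ports into one config snippet."""
    out = []
    for port in range(1, ports + 1):
        out += port_config(port, **kwargs)
    return "\n".join(out)


print(switch_config())
```

Regenerating the stanzas this way keeps the top/bottom convention consistent if ports are later repurposed.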

Finally, "clients" of the lab can be connected anywhere (but are most likely to be on the "home" network). They can reach the MAAS web UI directly, or use the MAAS CLI or any other feature to deploy systems from the MAAS server's libvirt installation.

Setting up the network hardware

I will avoid going into too much detail about the Cisco hardware, since the configuration is specific to it. The ASA has a restrictive firewall that blocks most traffic but allows SSH and HTTP access. Anything that needs to reach the internet goes through the MAAS internal proxy.

For simplicity, the ASA is always .1 in any subnet, and the switch is .2 when it needs an address (it was also made accessible over a serial cable from the MAAS server). The Raspberry Pi is always .5, and the MAAS server is always .25. On the staging and sandbox networks, DHCP ranges were designed to reserve .25 and below for static assignments. On the home network, which uses a /23 subnet, half of the addresses are reserved for static assignments and the other half is used for DHCP.
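The addressing convention is regular enough to compute. The sketch below is a hypothetical helper built on Python's standard ipaddress module; the role names and the SUBNETS table are illustrative, with the subnets taken from the configurations later in this post. It returns each role's fixed address and the range left over for DHCP.

```python
import ipaddress

# Fixed host offsets shared by every lab subnet, per the convention above.
ROLE_OFFSETS = {"asa": 1, "switch": 2, "raspberrypi": 5, "maas": 25}

# Illustrative table; subnets match the netplan and dnsmasq configs.
SUBNETS = {
    "home": "10.3.0.0/23",      # VLAN 1
    "staging": "10.3.98.0/24",  # VLAN 10
    "sandbox": "10.3.99.0/24",  # VLAN 15
}


def role_addresses(cidr):
    """Map each infrastructure role to its fixed address in the subnet."""
    net = ipaddress.ip_network(cidr)
    return {role: net.network_address + off for role, off in ROLE_OFFSETS.items()}


def dynamic_pool(cidr):
    """Return (first, last) addresses left for DHCP.

    /24: everything up to .25 is static, so the pool starts at .26.
    Otherwise (the home /23): lower half static, upper half dynamic.
    """
    net = ipaddress.ip_network(cidr)
    if net.prefixlen == 24:
        first = net.network_address + 26
    else:
        _, upper = net.subnets(prefixlen_diff=1)
        first = upper.network_address
    return first, net.broadcast_address - 1


for name, cidr in SUBNETS.items():
    first, last = dynamic_pool(cidr)
    print(f"{name:8} gw={role_addresses(cidr)['asa']} dhcp={first}-{last}")
```

For the home /23 this yields 10.3.1.0 to 10.3.1.254 as the dynamic half; the dnsmasq range actually used (10.3.1.50 to 10.3.1.250) sits inside it.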

MAAS server hardware setup

Netplan is used to configure the network on Ubuntu systems. The MAAS server's configuration looks like this:

```yaml
network:
  ethernets:
    enp0s25:
      addresses: []
      dhcp4: true
      optional: true
  bridges:
    maasbr0:
      addresses: [ 10.3.99.25/24 ]
      dhcp4: no
      dhcp6: no
      interfaces: [ vlan15 ]
    staging:
      addresses: [ 10.3.98.25/24 ]
      dhcp4: no
      dhcp6: no
      interfaces: [ vlan10 ]
  vlans:
    vlan15:
      dhcp4: no
      dhcp6: no
      accept-ra: no
      id: 15
      link: enp0s25
    vlan10:
      dhcp4: no
      dhcp6: no
      accept-ra: no
      id: 10
      link: enp0s25
  version: 2
```
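Each segment above follows the same pattern: a tagged VLAN on enp0s25, wrapped in a bridge that carries the static address. A minimal stdlib-only sketch (function names are hypothetical) can build that structure as a Python dict. It prints JSON for inspection only; writing actual YAML would need a third-party library such as PyYAML.

```python
import json


def vlan_bridge(parent, vlan_id, bridge, address):
    """Netplan fragments for one tagged VLAN on parent, wrapped in a bridge."""
    vlan_name = f"vlan{vlan_id}"
    vlan = {"id": vlan_id, "link": parent,
            "dhcp4": False, "dhcp6": False, "accept-ra": False}
    br = {"addresses": [address], "dhcp4": False, "dhcp6": False,
          "interfaces": [vlan_name]}
    return vlan_name, vlan, br


def build_netplan(parent, segments):
    """segments: iterable of (vlan_id, bridge_name, "address/prefix")."""
    net = {
        "version": 2,
        # Untagged traffic (VLAN 1, the home network) still uses DHCP.
        "ethernets": {parent: {"addresses": [], "dhcp4": True, "optional": True}},
        "bridges": {},
        "vlans": {},
    }
    for vlan_id, bridge, address in segments:
        vlan_name, vlan, br = vlan_bridge(parent, vlan_id, bridge, address)
        net["vlans"][vlan_name] = vlan
        net["bridges"][bridge] = br
    return {"network": net}


config = build_netplan("enp0s25", [
    (15, "maasbr0", "10.3.99.25/24"),  # sandbox, driven by MAAS
    (10, "staging", "10.3.98.25/24"),  # staging
])
print(json.dumps(config, indent=2))
```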

Both VLANs are placed behind bridges so that virtual machines can be attached to either network. Additional configuration files were added to define these bridges for libvirt, such as /etc/libvirt/qemu/networks/maasbr0.xml:

```xml
<network>
  <name>maasbr0</name>
  <forward mode="bridge"/>
  <bridge name="maasbr0"/>
</network>
```
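Only the maasbr0 definition is shown; the staging bridge from the netplan configuration needs an equivalent file. A minimal sketch that generates both with the standard xml.etree.ElementTree module, assuming each libvirt network is simply named after its bridge:

```python
import xml.etree.ElementTree as ET


def bridged_network_xml(bridge):
    """Build a libvirt <network> definition that reuses an existing host bridge."""
    network = ET.Element("network")
    ET.SubElement(network, "name").text = bridge  # assumption: name == bridge
    ET.SubElement(network, "forward", mode="bridge")
    ET.SubElement(network, "bridge", name=bridge)
    return ET.tostring(network, encoding="unicode")


for br in ("maasbr0", "staging"):
    print(bridged_network_xml(br))
```

A definition generated this way can also be loaded with virsh net-define, then activated with virsh net-start and virsh net-autostart, instead of placing the file by hand.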

Libvirt also needs to be accessible from the network so that MAAS can drive it using the "pod" feature. In /etc/libvirt/libvirtd.conf, uncomment "listen_tcp = 1" and set authentication as you see fit. Also set the following in /etc/default/libvirtd:

```sh
libvirtd_opts="-l"
```

Then restart the libvirtd service.
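To confirm from another machine that libvirtd is listening, a plain TCP connection test is enough. A minimal sketch with a hypothetical helper name, assuming libvirt's default TCP port 16509 (the tcp_port setting in libvirtd.conf):

```python
import socket

# libvirtd's default TCP port (tcp_port in libvirtd.conf) when listen_tcp = 1.
LIBVIRT_TCP_PORT = 16509


def port_open(host, port=LIBVIRT_TCP_PORT, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False
```

For example, port_open("10.3.99.25") run from a client on the sandbox network should return True once libvirtd has been restarted with TCP listening enabled. This only shows the port is open; it does not test authentication.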

dnsmasq server

The Raspberry Pi has a similar netplan configuration, but sets up static addresses on all interfaces (since it is the DHCP server). It uses dnsmasq to provide DNS, DHCP, and TFTP. The configuration is split across multiple files, but here are some of the important parts:

```
dhcp-leasefile=/depot/dnsmasq/dnsmasq.leases
dhcp-hostsdir=/depot/dnsmasq/reservations
dhcp-authoritative
dhcp-fqdn

# copied from maas, specify boot files per-arch.
dhcp-boot=tag:x86_64-efi,bootx64.efi
dhcp-boot=tag:i386-pc,pxelinux
dhcp-match=set:i386-pc, option:client-arch, 0 #x86-32
dhcp-match=set:x86_64-efi, option:client-arch, 7 #EFI x86-64

# pass search domains everywhere, it's easier to type short names
dhcp-option=119,home.example.com,staging.example.com,maas.example.com

domain=example.com
no-hosts
addn-hosts=/depot/dnsmasq/dns/
domain-needed
expand-hosts
no-resolv

# home network
domain=home.example.com,10.3.0.0/23
auth-zone=home.example.com,10.3.0.0/23
dhcp-range=set:home,10.3.1.50,10.3.1.250,255.255.254.0,8h
# specify the default gw / next router
dhcp-option=tag:home,3,10.3.0.1
# define the tftp server
dhcp-option=tag:home,66,10.3.0.5

# staging is configured as above, but on 10.3.98.0/24.

# maas.example.com: "isolated" maas network.
# send all DNS requests for X.maas.example.com to 10.3.99.25 (maas server)
server=/maas.example.com/10.3.99.25

# very basic tftp config
enable-tftp
tftp-root=/depot/tftp
tftp-no-fail

# set some "upstream" nameservers for general name resolution.
server=8.8.8.8
server=8.8.4.4
```
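The name resolution configured above can be summarized as a small model: clients try short names against each search domain from option 119, queries under maas.example.com go to the MAAS server, home and staging names are answered locally, and everything else goes upstream. This is a simplified Python sketch of that routing with hypothetical table names, not dnsmasq's actual logic.

```python
# Simplified model of the dnsmasq configuration above (not dnsmasq's own code).
SEARCH_DOMAINS = ["home.example.com", "staging.example.com", "maas.example.com"]
LOCAL_ZONES = ["home.example.com", "staging.example.com"]  # answered by dnsmasq
FORWARDERS = {"maas.example.com": "10.3.99.25"}            # server=/maas.example.com/...
UPSTREAM = "8.8.8.8"                                      # upstream, simplified to one


def in_domain(name, domain):
    """True if name is the domain itself or any name below it."""
    return name == domain or name.endswith("." + domain)


def expand(short_name):
    """Candidate FQDNs a client tries for a short name, in search-list order."""
    return [f"{short_name}.{domain}" for domain in SEARCH_DOMAINS]


def route(name):
    """Return which server answers a query for name in this model."""
    name = name.rstrip(".").lower()
    if "." not in name:
        return "local"  # domain-needed: plain names are never forwarded
    matches = [domain for domain in FORWARDERS if in_domain(name, domain)]
    if matches:
        # The most specific server=/domain/ entry wins.
        return FORWARDERS[max(matches, key=len)]
    if any(in_domain(name, zone) for zone in LOCAL_ZONES):
        return "local"
    return UPSTREAM


for host in ["vocal-toad.maas.example.com", "izanagi.home.example.com", "github.com"]:
    print(host, "->", route(host))
```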

DHCP reservations (which keep IP addresses from changing across reboots for systems I want to reach regularly) are kept in /depot/dnsmasq/reservations, as configured above, and look like this:

```
de:ad:be:ef:ca:fe,10.3.0.21
```

I put one reservation per file, with meaningful file names. This helps with debugging and with making changes when network cards are replaced, etc. The file names do not match DNS names; instead they are short descriptions of the device (such as "thinkpad-x230"), since I may want to rename things later.

Similarly, files in /depot/dnsmasq/dns are named after the hardware they describe, but contain entries in hosts file format:

```
10.3.0.21 izanagi
```

This way, renaming a device only requires changing the contents of a single file in /depot/dnsmasq/dns, rather than also renaming other files or matching MAC addresses to make sure the right entry is changed.
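A hypothetical helper for adding a device under this one-file-per-device scheme. It assumes the /depot/dnsmasq layout above (passed in as base) and validates the MAC and IP address before writing. Note that dnsmasq picks up new files in dhcp-hostsdir automatically, while files given with addn-hosts are only re-read on SIGHUP.

```python
import ipaddress
import re
from pathlib import Path

MAC_RE = re.compile(r"^([0-9a-f]{2}:){5}[0-9a-f]{2}$")


def add_device(base, device, mac, ip, hostname):
    """Write one reservation file and one hosts file, both named after the device."""
    mac = mac.lower()
    if not MAC_RE.match(mac):
        raise ValueError(f"bad MAC address: {mac}")
    ip = ipaddress.ip_address(ip)  # raises ValueError on a malformed address
    base = Path(base)
    (base / "reservations").mkdir(parents=True, exist_ok=True)
    (base / "dns").mkdir(parents=True, exist_ok=True)
    (base / "reservations" / device).write_text(f"{mac},{ip}\n")
    (base / "dns" / device).write_text(f"{ip} {hostname}\n")


def rename_host(base, device, new_hostname):
    """Renaming only rewrites the device's hosts file; the reservation is untouched."""
    path = Path(base) / "dns" / device
    ip, _old_names = path.read_text().split(maxsplit=1)
    path.write_text(f"{ip} {new_hostname}\n")
```

For example, add_device("/depot/dnsmasq", "thinkpad-x230", "de:ad:be:ef:ca:fe", "10.3.0.21", "izanagi") would produce the two sample entries shown above.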

Installing MAAS

At this point, networking should be fully configured, and libvirt should be ready and accessible from the network.

The MAAS installation process is very straightforward: simply install the maas package, which pulls in maas-rack-controller and maas-region-controller.

Once the configuration is complete, you can log in to the web interface. Use it to make sure, under Subnets, that only the MAAS-driven VLAN has DHCP enabled. To enable or disable DHCP, click the link in the VLAN column, and use the "Take action" menu to provide or disable DHCP.

This is necessary if you do not want MAAS to manage the whole network and provide DNS and DHCP for all systems. In my case, I keep MAAS in its own isolated network, since I keep the server offline when I don't need it (and the home network needs to keep working while I'm away).

Some extra modifications were made to the stock MAAS configuration to change the behavior of deployed systems. For example, I often test packages in -proposed, so it is convenient to have that pocket enabled by default, with the archive pinned to avoid accidentally installing these packages. Since I also do netplan development and might try things that break network connectivity, I also make sure there is a static password for the 'ubuntu' user and that my own account is created (again, with a static, known, and stupidly simple password), so I can log in to deployed systems on their console. I added the following to /etc/maas/preseed/curtin_userdata:

```yaml
late_commands:
  [...]
  pinning_00: ["curtin", "in-target", "--", "sh", "-c", "/bin/echo 'Package: *' >> /etc/apt/preferences.d/proposed"]
  pinning_01: ["curtin", "in-target", "--", "sh", "-c", "/bin/echo 'Pin: release a={{release}}-proposed' >> /etc/apt/preferences.d/proposed"]
  pinning_02: ["curtin", "in-target", "--", "sh", "-c", "/bin/echo 'Pin-Priority: -1' >> /etc/apt/preferences.d/proposed"]
apt:
  sources:
    proposed.list:
      source: deb $MIRROR {{release}}-proposed main universe
write_files:
  userconfig:
    path: /etc/cloud/cloud.cfg.d/99-users.cfg
    content: |
      system_info:
        default_user:
          lock_passwd: False
          plain_text_passwd: [REDACTED]
      users:
        - default
        - name: mtrudel
          groups: sudo
          gecos: Matt
          shell: /bin/bash
          lock-passwd: False
          passwd: [REDACTED]
```

The pinning_ entries are simply added to the end of the "late_commands" section.
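Because the three pinning_ entries differ only in the line they append, they can be generated rather than written by hand. A minimal sketch with hypothetical names; it leaves the {{release}} placeholder untouched for MAAS to fill in, and prints the entries in the same form as the curtin_userdata snippet above.

```python
import json

PREFS_FILE = "/etc/apt/preferences.d/proposed"

# One apt preferences line per late command; {{release}} is left for MAAS.
PIN_LINES = [
    "Package: *",
    "Pin: release a={{release}}-proposed",
    "Pin-Priority: -1",
]


def pinning_commands(lines=PIN_LINES, path=PREFS_FILE):
    """Return curtin late_commands entries that append each line to path."""
    commands = {}
    for index, line in enumerate(lines):
        if "'" in line:
            # Each line is wrapped in single quotes for sh -c below.
            raise ValueError(f"single quote in preferences line: {line!r}")
        commands[f"pinning_{index:02d}"] = [
            "curtin", "in-target", "--", "sh", "-c",
            f"/bin/echo '{line}' >> {path}",
        ]
    return commands


for name, command in pinning_commands().items():
    print(f"  {name}: {json.dumps(command)}")
```

Adding a line to PIN_LINES then produces a matching pinning_03 entry, which keeps the late_commands and the resulting preferences file in sync.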

For the libvirt instance, you will need to add it to MAAS using the maas CLI tool. For this, you will need to get your MAAS API key from the web UI (click your username, then look under MAAS keys), and run the following commands:

```sh
maas login local http://localhost:5240/MAAS/ [your MAAS API key]
maas local pods create type=virsh power_address="qemu+tcp://127.0.1.1/system"
```

The pod will be given a name automatically; you'll then be able to use the web interface to "compose" new machines and control them via MAAS. If you want to remotely use the systems' Spice graphical console, you may need to change settings for the VM to allow Spice connections on all interfaces, and power it off and on again.

Setting up the client

Deployed hosts are now reachable over SSH using their fully qualified names, as the ubuntu user (or another user you already configured):

```sh
ssh ubuntu@vocal-toad.maas.example.com
```

There is one inconvenience with using MAAS to control virtual machines like this: since they are easy to reinstall, their SSH host keys change frequently. There's a way around that, using a specially crafted SSH client configuration (~/.ssh/config). Here are the relevant parts of the configuration file I use:

```
CanonicalDomains home.example.com
CanonicalizeHostname yes
CanonicalizeFallbackLocal no
HashKnownHosts no
UseRoaming no

# canonicalize* options seem to break github for some reason
# I haven't spent much time looking into it, so let's make sure it will go through the
# DNS resolution logic in SSH correctly.
Host github.com
    Hostname github.com.

Host *.maas
    Hostname %h.example.com

Host *.staging
    Hostname %h.example.com

Host *.maas.example.com
    User ubuntu
    StrictHostKeyChecking no
    UserKnownHostsFile /dev/null

Host *.staging.example.com
    StrictHostKeyChecking no
    UserKnownHostsFile /dev/null

Host *.lxd
    StrictHostKeyChecking no
    UserKnownHostsFile /dev/null
    ProxyCommand nc $(lxc list -c s4 $(basename %h .lxd) | grep RUNNING | cut -d' ' -f4) %p

Host *.libvirt
    StrictHostKeyChecking no
    UserKnownHostsFile /dev/null
    ProxyCommand nc $(virsh domifaddr $(basename %h .libvirt) | grep ipv4 | sed 's/.* //; s,/.*,,') %p
```

As a bonus, the last two Host entries make it easy to SSH to local libvirt virtual machines or LXD containers.

The net effect is that I no longer get warnings about changed host keys for MAAS-controlled systems and machines on the staging network, but still get them for all other systems.
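Why only some hosts get the relaxed settings follows from how ssh_config is evaluated: for each keyword, the first value obtained from a matching Host block wins. The sketch below models that rule in Python over a subset of the configuration above. It is deliberately simplified (no Match blocks, negated patterns, canonicalization, or re-parsing after a hostname change), so treat it as an illustration rather than a reimplementation of OpenSSH.

```python
from fnmatch import fnmatchcase

# A subset of the ssh_config above, used to exercise the model.
CONFIG = """\
HashKnownHosts no
Host github.com
    Hostname github.com.
Host *.maas
    Hostname %h.example.com
Host *.maas.example.com
    User ubuntu
    StrictHostKeyChecking no
    UserKnownHostsFile /dev/null
"""


def parse(text):
    """Split config text into (patterns, [(keyword, value), ...]) blocks."""
    blocks = [(["*"], [])]  # options before the first Host apply to every host
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        keyword = parts[0].lower()  # keywords are case-insensitive
        value = parts[1] if len(parts) > 1 else ""
        if keyword == "host":
            blocks.append((value.split(), []))
        else:
            blocks[-1][1].append((keyword, value))
    return blocks


def effective_options(host, blocks):
    """Apply the first-obtained-value-wins rule; %h expands to the given host."""
    options = {}
    for patterns, settings in blocks:
        if any(fnmatchcase(host, pattern) for pattern in patterns):
            for keyword, value in settings:
                options.setdefault(keyword, value.replace("%h", host))
    return options


print(effective_options("vocal-toad.maas.example.com", parse(CONFIG)))
```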

Now, to reach a host on the MAAS network, a client system only needs to use the short name with .maas tacked on:

```
vocal-toad.maas
```

The system will be reachable without any warning about known host keys. Do note that this is only appropriate for a sandbox environment: you definitely want to see such warnings in production, as they can indicate that the system you are connecting to is not the one you think.

That's not bad, but the goal is to use just the short names. I work around this with a tiny script:

```sh
#!/bin/sh
# Usage: sandbox HOST [ssh arguments...]; .maas is appended to HOST only.
host="$1"; shift
exec ssh "$host.maas" "$@"
```

I saved this as "sandbox" in ~/bin and made it executable.

And with this, the lab is ready.

Usage

To connect to a deployed system, one can now do the following:

```
$ sandbox vocal-toad
Warning: Permanently added 'vocal-toad.maas.example.com,10.3.99.12' (ECDSA) to the list of known hosts.
Welcome to Ubuntu Cosmic Cuttlefish (development branch) (GNU/Linux 4.15.0-21-generic x86_64)
[...]
ubuntu@vocal-toad:~$
ubuntu@vocal-toad:~$ id mtrudel
uid=1000(mtrudel) gid=1000(mtrudel) groups=1000(mtrudel),27(sudo)
```

Mobility

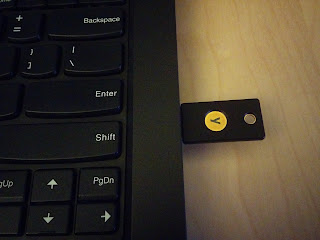

One important requirement for me was the mobility of the lab. While some of the network infrastructure must remain in place, I can undock the ThinkPad X230 (the MAAS server) and connect it to an external network over wireless. It continues to "manage" VLAN 15 on its wired interface. In these cases, I bring another small configurable switch, a Cisco Catalyst 2960 (8 + 1 ports), which is set up with the same VLANs. A client can then be connected directly on VLAN 15 behind the MAAS server, and can use the MAAS proxy service to reach the internet. This lets me bring the MAAS server along with all its virtual machines, and still deploy new systems by connecting them to the switch. Both the server and the switch fit easily in a standard laptop bag, along with another laptop (a "client").

All the systems used in the "semi-permanent" form of this lab can easily run from a single household power outlet, so power is unlikely to be an issue when the lab is mobile. The smaller switch is rated for 0.5 A, and two laptops do not draw very much power.
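To put rough numbers on that claim, here is a worst-case estimate under assumed values, not measurements: 120 V mains, laptop power adapters of about 90 W each, and a typical 15 A household circuit, with the switch's 0.5 A rating treated as its maximum draw.

```latex
\begin{aligned}
P_{\text{switch}}  &\le 0.5\,\text{A} \times 120\,\text{V} = 60\,\text{W} \\
P_{\text{laptops}} &\le 2 \times 90\,\text{W} = 180\,\text{W} \\
I_{\text{total}}   &\le \frac{60\,\text{W} + 180\,\text{W}}{120\,\text{V}} = 2\,\text{A} \ll 15\,\text{A}
\end{aligned}
```

Even with generous assumptions, the mobile setup draws a small fraction of what a single outlet can supply.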

Next steps

One remaining issue with this setup is that it is limited to either starting MAAS images or starting custom-built images hooked up to the Raspberry Pi, so integrating new images takes a lot of effort:

- Custom (desktop?) images could be loaded into MAAS, to make it easier to start a desktop build.
- Automate customizing installed packages based on tags applied to the machines.
  - Juju would shine there: it can deploy workloads onto available machines in MAAS that have the specified tags.
- Also install a generic system with customized packages, not necessarily single workloads, and/or install extra packages after the initial system deployment.
  - This could be done using Chef or Puppet, but would require setting up the infrastructure for it.
- Integrate automatic installation of snaps.
- Load new images into the Raspberry Pi automatically for netboot / preseeded installs.
  - I have scripts for this, but they will take time to adapt.
  - Space on such a device is at a premium, so old images must be culled.
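The last item, culling old images on the Raspberry Pi, could start as something like the sketch below. The original scripts are not shown, so the naming scheme (family-YYYYMMDD.ext) and the keep-the-newest-N policy are assumptions; by default it only reports what it would remove.

```python
import re
from collections import defaultdict
from pathlib import Path

# Assumed (hypothetical) naming scheme: <family>-<YYYYMMDD>.<ext>,
# e.g. cosmic-desktop-amd64-20180601.iso.
IMAGE_RE = re.compile(r"^(?P<family>.+)-(?P<date>\d{8})\.[^.]+$")


def images_to_cull(directory, keep=2):
    """Return all but the `keep` newest images of each family, by date in the name."""
    families = defaultdict(list)
    for path in Path(directory).iterdir():
        match = IMAGE_RE.match(path.name)
        if match and path.is_file():
            families[match["family"]].append((match["date"], path))
    stale = []
    for entries in families.values():
        entries.sort(reverse=True)  # newest date first
        stale.extend(path for _, path in entries[keep:])
    return sorted(stale)


def cull(directory, keep=2, dry_run=True):
    """Delete (or, by default, only list) the images selected above."""
    for path in images_to_cull(directory, keep):
        print("would remove" if dry_run else "removing", path)
        if not dry_run:
            path.unlink()
```

Files that don't match the naming scheme are never touched, which keeps unrelated files in the TFTP root safe.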